At some point in every AI workshop, someone asks the question.

“But isn’t it just… Googling? Like, it searches a database and finds the answer?”

It’s a reasonable guess. The output looks like retrieval — you ask something, it responds. But the mechanism is completely different, and getting this wrong shapes everything else people think about AI.

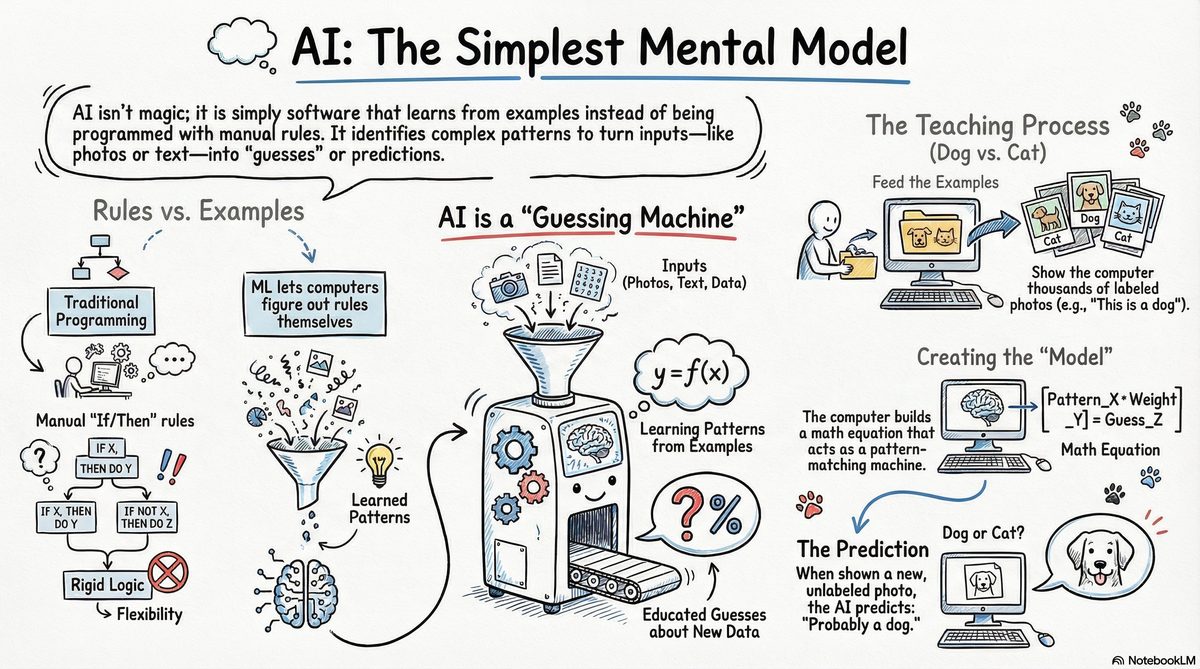

Not rules. Examples.

Traditional programming works like this: a programmer writes explicit rules, the computer follows them. If temperature exceeds 30 degrees, turn on the fan. Every behaviour is a rule someone wrote.

Machine learning flips this. Instead of writing rules, you feed the system examples — thousands, millions of them — and let it figure out the rules itself. You don’t tell it what to look for. You show it, over and over, and it finds patterns you didn’t even know existed.

The computer isn’t searching a database. It’s not retrieving a stored answer. It’s computing a new one, based on patterns it absorbed during training.
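To make the contrast concrete, here is a minimal sketch using the fan example from above. The labelled readings in `EXAMPLES` are made up for illustration, and `learn_threshold` is a hypothetical helper, not a real library function. It simply tries a handful of cut-offs and keeps the one that agrees with the most examples.

```python
# Traditional programming: a person chose the number 30 and wrote it down.
def fan_rule(temperature_c: float) -> bool:
    return temperature_c > 30


# Machine learning, in miniature: nobody writes the number down.
# Hypothetical labelled examples: (temperature in Celsius, was the fan on?)
EXAMPLES = [
    (18, False), (22, False), (27, False), (29, False),
    (31, True), (33, True), (36, True), (40, True),
]


def learn_threshold(examples):
    """Pick the cut-off that misclassifies the fewest examples."""
    temps = sorted(t for t, _ in examples)
    # Candidate cut-offs: midpoints between neighbouring temperatures.
    candidates = [(a + b) / 2 for a, b in zip(temps, temps[1:])]

    def errors(threshold):
        return sum((t > threshold) != label for t, label in examples)

    return min(candidates, key=errors)


threshold = learn_threshold(EXAMPLES)


def fan_learned(temperature_c: float) -> bool:
    # Computes a fresh answer for any temperature, including unseen ones.
    return temperature_c > threshold


print(threshold, fan_learned(35), fan_learned(25))
```

Notice that `fan_learned(35)` works even though 35 never appears in the examples. Nothing is looked up; the answer is computed from a pattern the code extracted.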

The dog and the cat

The example that usually lands: teaching a child to recognise dogs.

You don’t sit down and explain ear geometry. You don’t describe fur texture or nose proportion. You show photos. “Dog.” “Cat.” “Dog.” Enough times, and they just know — through exposure, not explanation.

Machine learning works in much the same way. A million labelled images go in. The system finds patterns at a level of detail no human could articulate. Then you show it a new photo it’s never seen, and it says: probably dog.

That guess is called a prediction. The system that produces it is called a model.
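A toy version of that loop, as a sketch only: real image models learn from raw pixels, while this one assumes each animal has already been boiled down to two invented measurements (weight and snout length). The data, the feature choice, and the `train` and `predict` helpers are all illustrative assumptions. The technique is a nearest-centroid classifier, one of the simplest ways to learn from labelled examples.

```python
from collections import defaultdict
from math import dist

# Hypothetical labelled examples: (weight in kg, snout length in cm) -> label
TRAINING = [
    ((30.0, 10.0), "dog"), ((22.0, 9.0), "dog"), ((8.0, 6.0), "dog"),
    ((4.0, 2.0), "cat"), ((5.0, 2.5), "cat"), ((3.5, 2.0), "cat"),
]


def train(examples):
    """Build the model: one average point (centroid) per label."""
    grouped = defaultdict(list)
    for features, label in examples:
        grouped[label].append(features)
    return {
        label: tuple(sum(column) / len(column) for column in zip(*points))
        for label, points in grouped.items()
    }


def predict(model, features):
    """Return the label whose centroid is closest to the new example."""
    return min(model, key=lambda label: dist(model[label], features))


model = train(TRAINING)

# An animal that does not appear anywhere in TRAINING.
print(predict(model, (25.0, 9.5)))
```

The `model` here is just two averaged points, yet it can label animals it has never seen. Scale the same idea up by many orders of magnitude and you get something closer to a real image classifier.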

What a model actually is

A model is a very large math equation with adjustable numbers inside it. Input goes in, prediction comes out.

Photo → “cat”. Email → “spam”. Voice → text. Text → answer.

The equation isn’t written by a programmer — it’s discovered through training. Billions of tiny adjustments, made while the system worked through examples and checked itself against the right answers.
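Here is what “tiny adjustments” can look like in practice, as a sketch under simple assumptions. The equation has only two adjustable numbers, `w` and `b`, and the made-up examples in `DATA` follow a hidden relationship, y = 2x + 1, that the code is never told about. The method is plain gradient descent; real systems use more elaborate variants of the same idea on billions of numbers.

```python
# Hypothetical examples generated from the hidden relationship y = 2x + 1.
DATA = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0), (4.0, 9.0)]


def train(data, steps=5000, learning_rate=0.01):
    """Fit y = w * x + b by repeatedly nudging w and b to shrink the error."""
    w, b = 0.0, 0.0  # start with a useless equation: y = 0
    n = len(data)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in data:
            error = (w * x + b) - y  # prediction minus the right answer
            grad_w += 2 * error * x / n
            grad_b += 2 * error / n
        # The tiny adjustment: move each number slightly in the direction
        # that reduces the average squared error.
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


w, b = train(DATA)
print(round(w, 3), round(b, 3))
```

After training, `w` and `b` end up very close to 2 and 1. Nobody typed those values in; they emerged from checking guesses against the right answers, over and over.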

This is why it can do things no rule-based system could. Nobody wrote down every possible way a spam email can look. The model found the pattern itself.

Where “AI” sits in all this

People use AI as a big umbrella. Underneath it:

Machine learning is how it learns. Neural networks are a popular structure for building ML systems. Deep learning is what you get when those networks are stacked many layers deep. LLMs (large language models, the thing powering ChatGPT) are deep learning systems trained mainly on text.

So when someone says “AI is just a database”, they’re describing something closer to a 1990s search engine. What we have now is different in kind, not just in scale.

The one-sentence version

AI is software that learns from examples instead of being programmed with rules.

Everything else is detail on top of this.

The misconception matters because it shapes expectations. If you think AI is a lookup table, you’ll be surprised by everything — both the things it gets right and the things it gets catastrophically wrong. Understanding that it’s a pattern-matcher trained on data tells you something real: why it’s unreliable on facts it wasn’t trained on, why it sounds confident while being wrong, why it behaves differently from a calculator.

It’s not magic. It’s not a database.

It’s a very complicated guessing machine that got very good at guessing.